However, achieving this in a user-friendly, affordable way is complicated. As a result, Ladder had taken the approach of requiring some users to complete lab work in order to provide the most compelling offers. But for this set of users, the experience was not great.

To achieve this, we would analyze our current traffic performance, identify potential opportunities, and ultimately launch a test aimed at improving the experience.

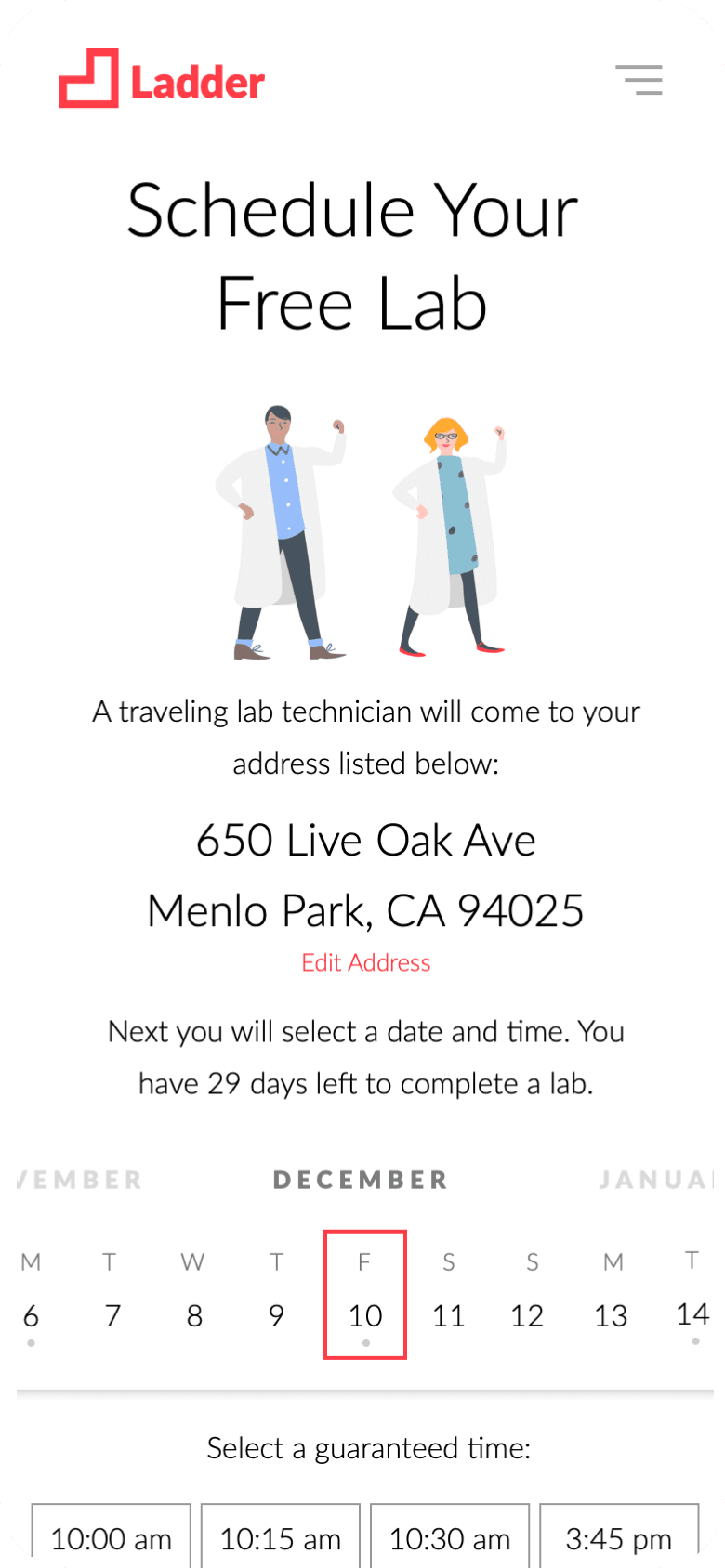

To understand how things got to where they are, it's helpful to first rewind and share a brief history. It all began with offering lab work through mobile technicians.

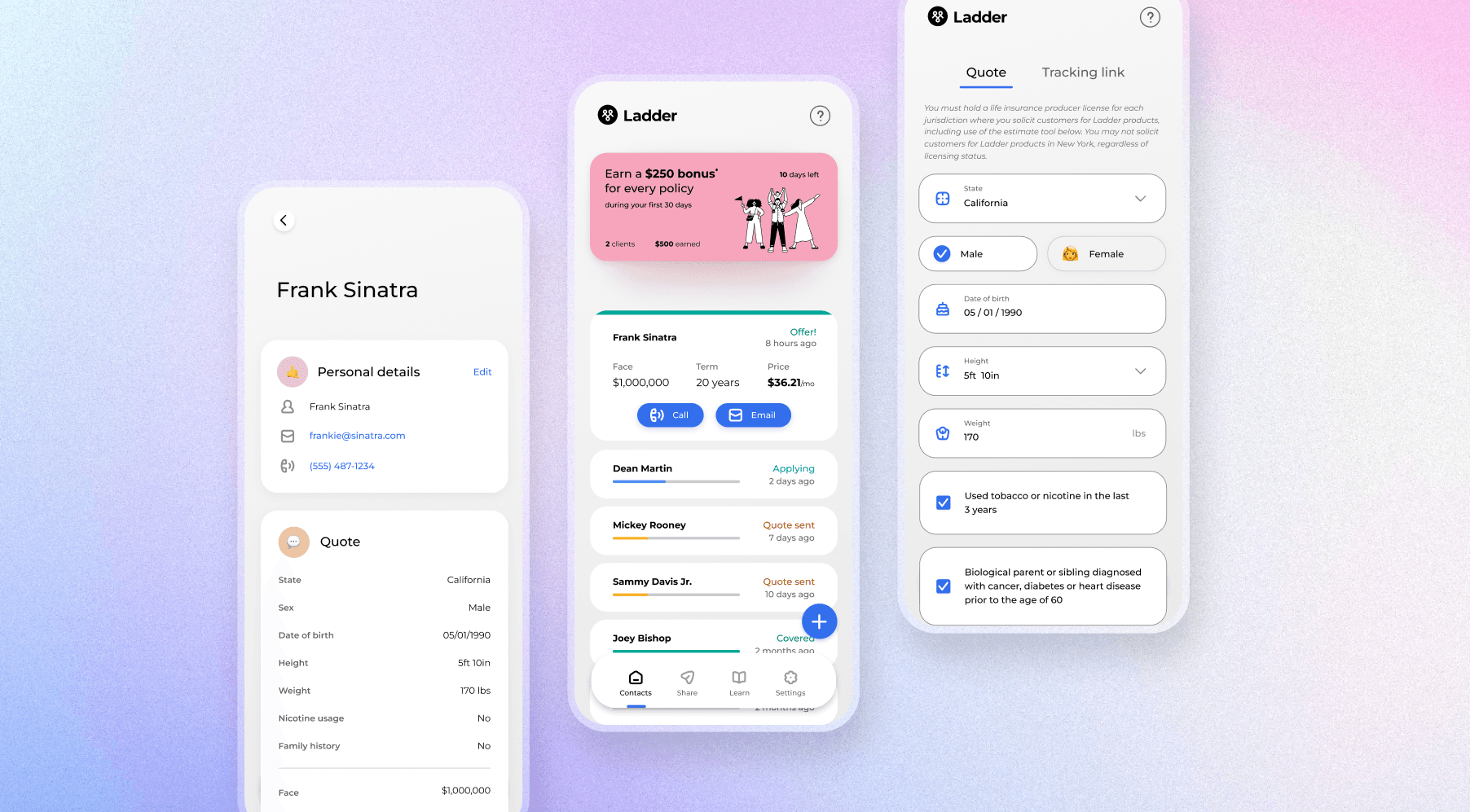

Ladder's online application worked great for young, healthy people who posed low risk. People with more complicated medical histories, though, were tougher to evaluate. Early in my time at Ladder, I built the page where users signed up for a mobile technician visit.

This cheek swab option was less invasive, easier to do on their own time, and helped us reduce the number of labs needed. I was responsible for designing the entire experience. This included a process to request the kit online, the design of the packaging, the instructions, and even the process for completing and shipping it.

For a startup our size, this meant we were hand-assembling and shipping these kits to our users.

The spit kit proved to be a success, with conversion improving notably. As traffic continued to increase, however, hand assembly soon became unsustainable. So I signed an agreement with a vendor who could custom-print, assemble, and ship the packages.

The test involved poking your finger to provide a few drops of blood, and was a more robust indicator of health. This "spot kit" had many of the same conversion improvements as the spit kit.

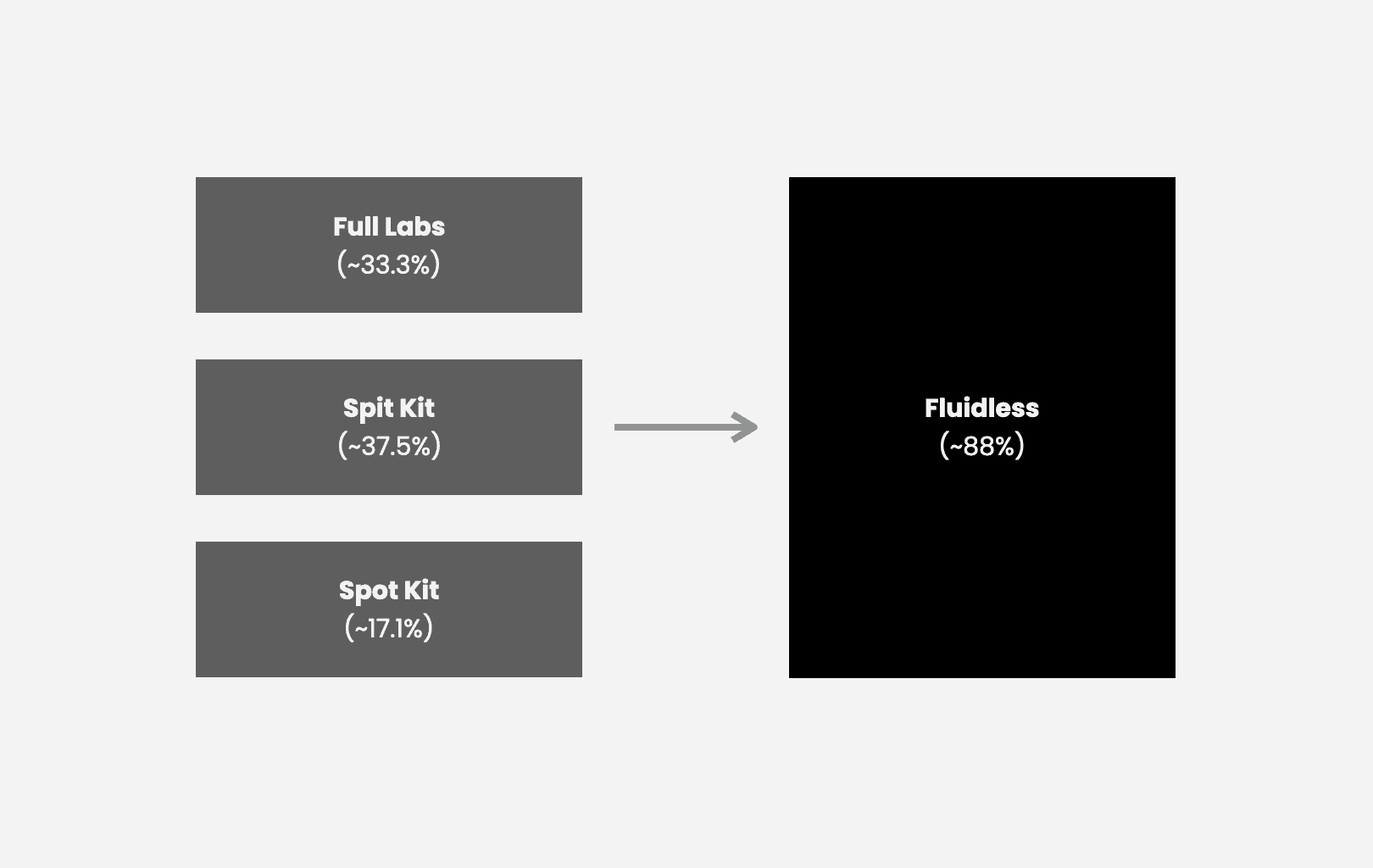

The volume of Ladder traffic finishing a life insurance application was already small. Splitting this traffic into three experiences made it extremely difficult to continuously test, evaluate, and improve. In fact, a single A/B experiment could run for months without reaching statistical significance.

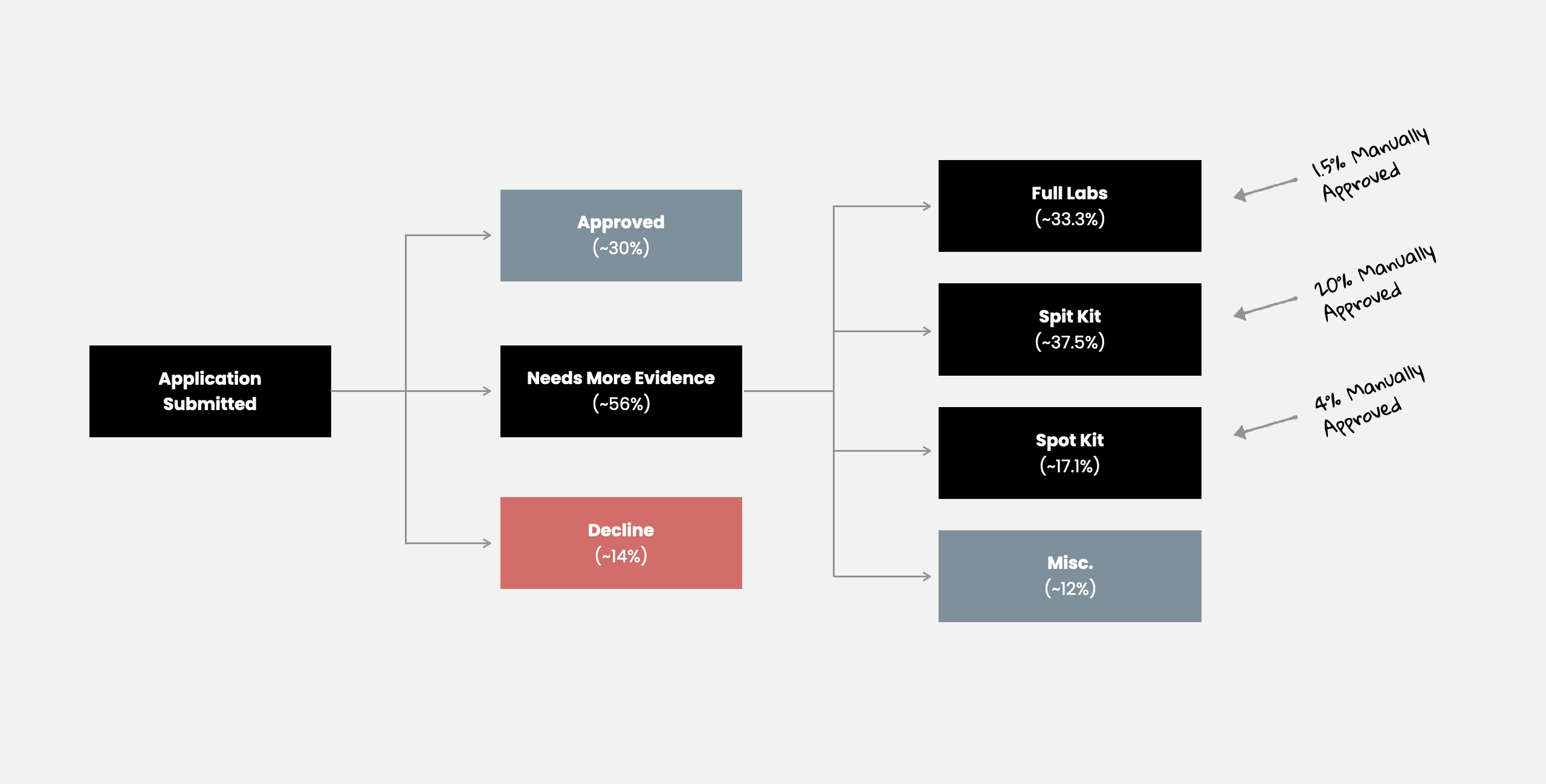

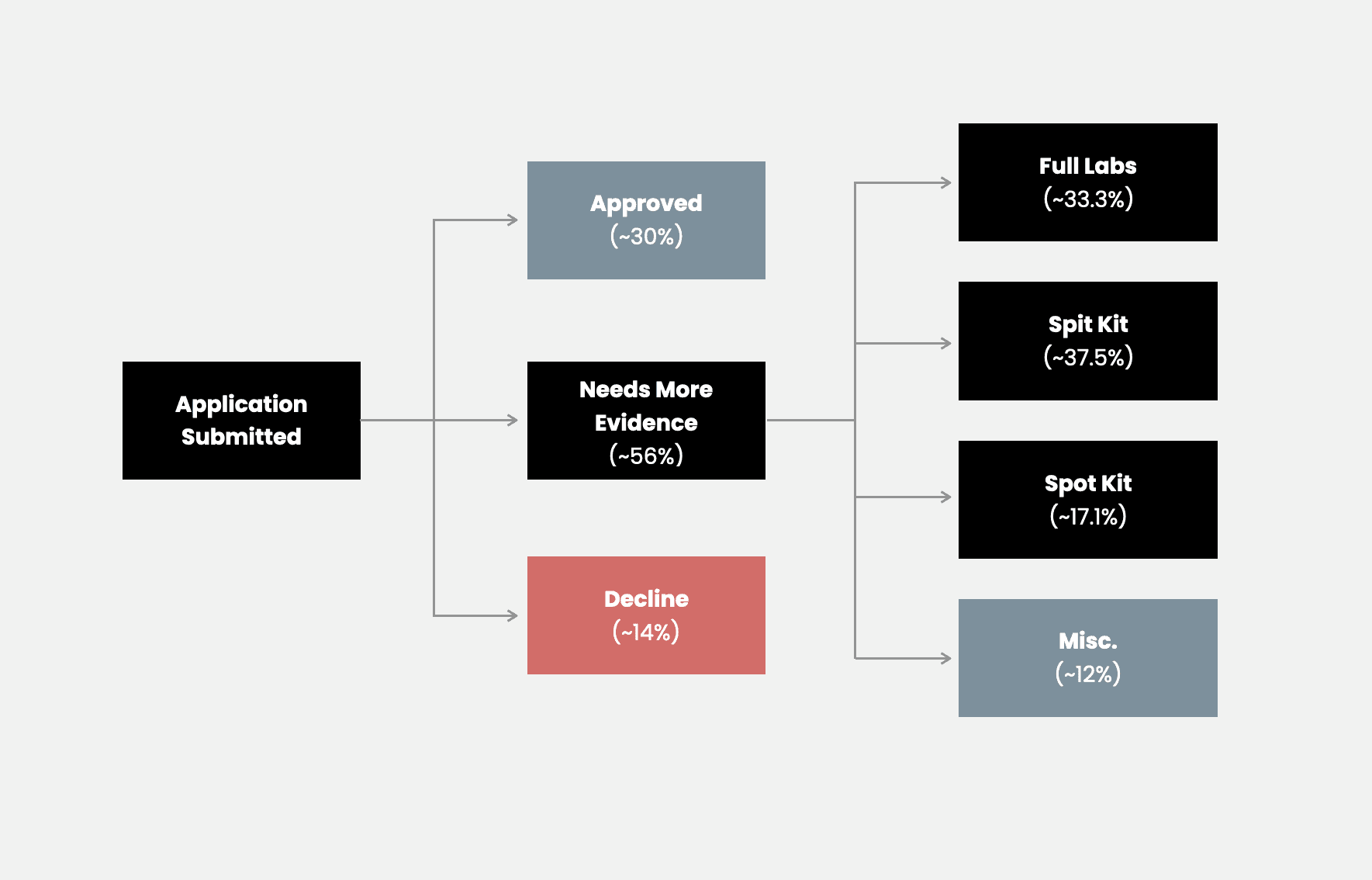

In order to improve performance, I first analyzed how traffic branched at this point in our product.

Not only that, the volume of users needing additional evidence had grown from a small portion to over half of all traffic.

As a result, more decisions were being made by simply having humans (underwriters) evaluate the applicant's existing data. However, most applications never reached a human because the user never completed the necessary requirements.

The thought occurred: what if we just removed all of it?

If we could instead push every application to manual review, we suspected we could increase the number of offers given. As long as 7% or more got coverage, it would be a win.

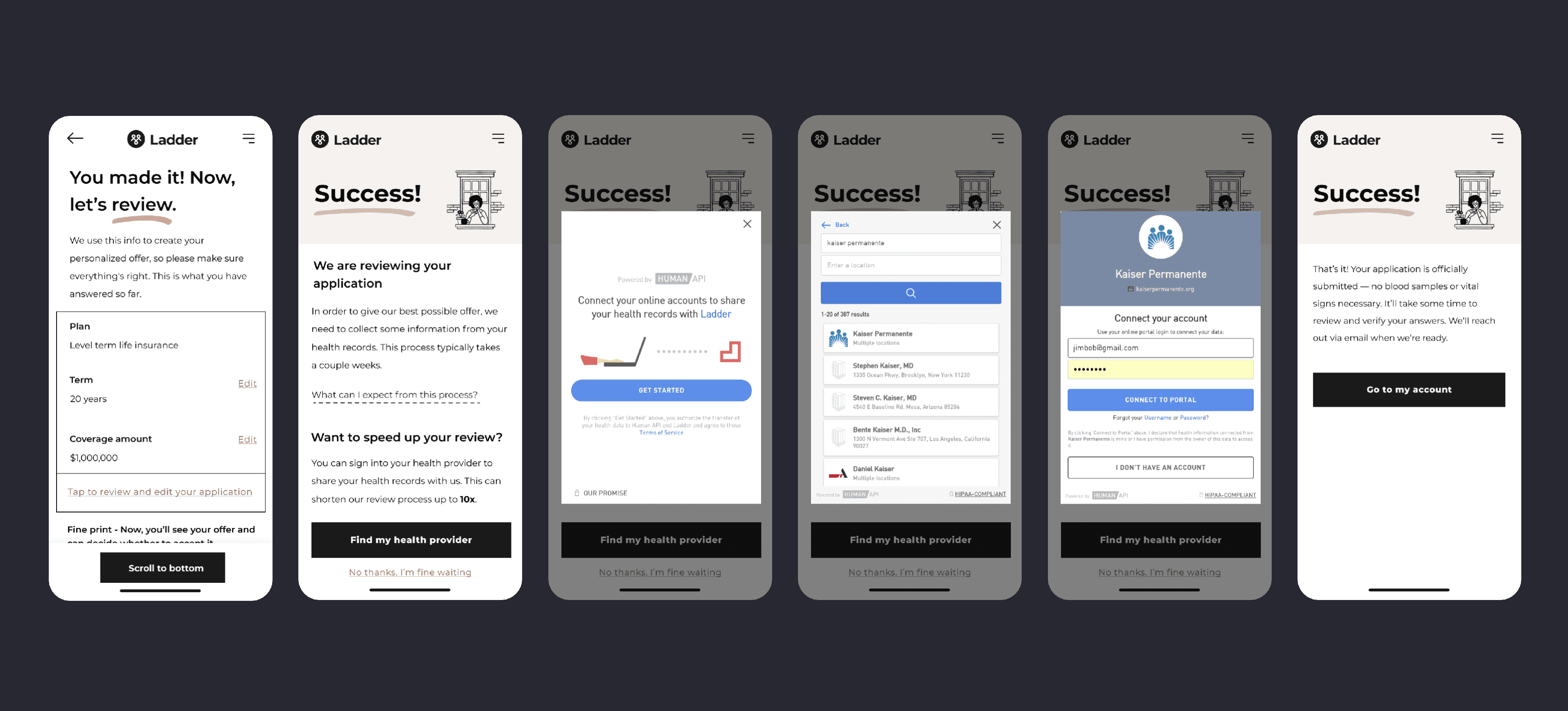

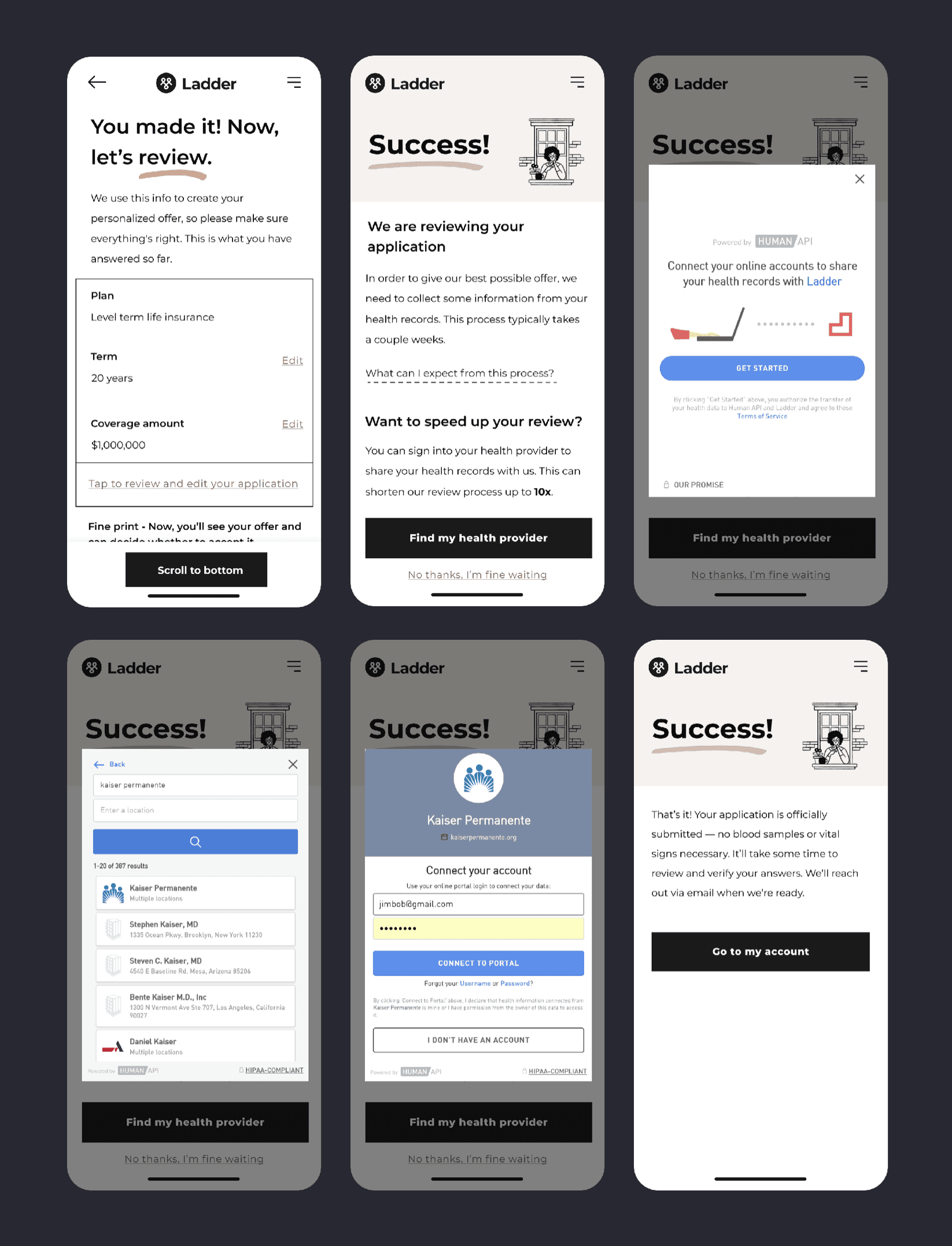

As an alternative to a lab, a doctor's report was helpful for evaluating an applicant. We discovered that these requests often did not return successfully, resulting in fewer offers given. If we could encourage users to connect their electronic health record, we might be able to further increase our chance of success.

I built out this new user flow and implemented email communications for potential drop-offs.

A change this large required comprehensive consideration of every dependency. Through numerous conversations, I ensured we were prepared for potential shifts in traffic performance, partner payments, underwriter workload, support team outreach, kit manufacturing reduction, finance and accounting, and more.

While we had hoped for an even greater improvement, we knew this was a huge step closer to our goal of helping people get life insurance entirely online.
